Over the last year, Fishbowl Solutions has been working on creating a reusable and extendable accelerator for quickly developing and deploying Portals on top of the Oracle Content Management platform (formerly Oracle Content & Experience or OCE).

The accelerator frontend is built with Svelte/SapperJS and can be deployed to support either SSR or SPA as a Progressive Web Application.

Earlier this month, we enhanced the accelerator frontend to support offline access to data exposed from OCM. In this post I will show you how you can easily extend the Sapper framework to provide offline application access via the Google Workbox library.

Why Google Workbox?

The Sapper framework already comes with a built-in service worker for creating a performant core application. However, we wanted more, and Google’s Workbox library provides us with just that – Precaching, Runtime Caching, Strategies, Request Routing, Background Sync, and an easy-to-debug response.

How do I integrate Sapper and Workbox?

There are several approaches you can use, such as building a precache manifest with Rollup or Webpack, but Sapper handles a lot of what you need right out of the box.

At a high level: create a new Sapper project and install the Workbox packages used below (‘workbox-precaching’, ‘workbox-routing’, ‘workbox-strategies’, and ‘workbox-expiration’) into it.

Open up the Sapper service-worker script ‘/src/service-worker.js’

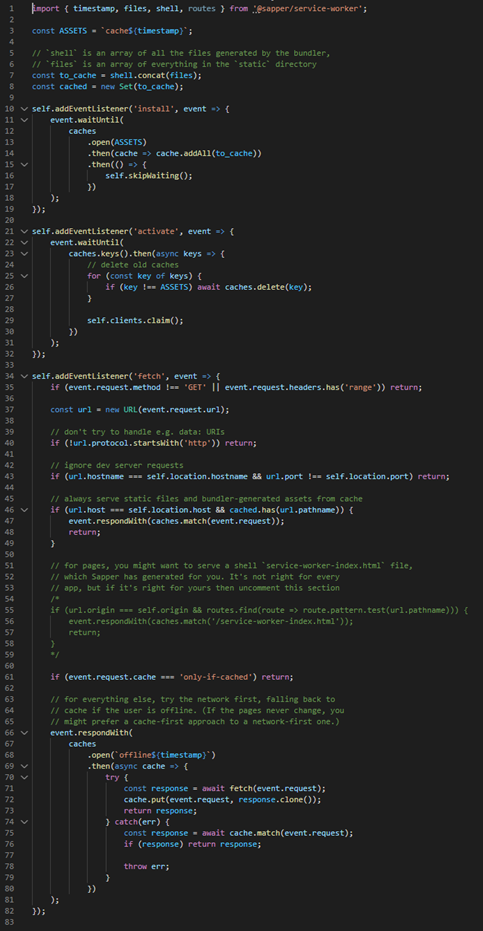

It should look something like this:

Let’s first import the workbox scripts we will need.

import { createHandlerBoundToURL, precacheAndRoute } from 'workbox-precaching';
import { NavigationRoute, registerRoute } from 'workbox-routing';
import { CacheFirst, StaleWhileRevalidate } from 'workbox-strategies';
import { ExpirationPlugin } from 'workbox-expiration';

If we take a look at line 1:

import { timestamp, files, shell, routes } from '@sapper/service-worker';

‘timestamp’ – This provides us with the timestamp of the build.

‘files’ – Access to the assets held within the static folder, which are served untouched.

‘shell’ – Access to the assets that are compiled and bundled by Webpack or Rollup.

‘routes’ – All the routes that are available as part of the routing model.

This is great because we have all the key elements required to easily tie into the workbox library.

Create an array of assets to cache with the workbox

Let’s start by caching the generated assets, which Sapper exposes through `shell`.

const cacheAssets = [];

shell.forEach((asset) => {
  cacheAssets.push({
    url: `/${asset}`,
    revision: null,
  });
});

The `revision` key can stay null because each generated filename is already unique to its build.

Next, let’s cache all the untouched assets held within the static folder, which Sapper exposes through `files`. You can add your own logic here if there are assets you don’t want to cache.

const ASSETS = `cache${timestamp}`;

files.forEach((asset, i) => {
  cacheAssets.push({
    url: `/${asset}`,
    revision: `${i}_${ASSETS}`,
  });
});

Every time I create a new build I want to make sure all the assets within the `/static` folder are refreshed – so we apply the timestamp as the revision value against the URL. This will force refresh the asset served to the end user.
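To make the shape of the resulting array concrete, here is a minimal sketch that builds the same kind of list as a plain function. The helper name `buildPrecacheManifest` and the sample file names are hypothetical and exist only for illustration; in the real service worker the inputs come from ‘@sapper/service-worker’.

```javascript
// Hypothetical helper: builds a precache list in the { url, revision } shape
// that precacheAndRoute accepts. `shell`, `files`, and `timestamp` stand in
// for the values Sapper exports.
function buildPrecacheManifest(shell, files, timestamp) {
  const generated = shell.map((asset) => ({
    url: `/${asset}`,
    revision: null, // the generated filename is already unique per build
  }));
  const staticAssets = files.map((asset, i) => ({
    url: `/${asset}`,
    revision: `${i}_cache${timestamp}`, // changes with every build
  }));
  return [...generated, ...staticAssets];
}

// Sample values for illustration only.
const manifest = buildPrecacheManifest(
  ['client/main.abc123.js'],
  ['favicon.png', 'manifest.json'],
  1600000000000
);
console.log(manifest);
```

Because the revision of every static asset changes whenever the timestamp changes, a new build should cause those entries to be fetched again, while the generated files are only fetched when their URL changes.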

Create an array of routes to cache

Next is route caching. I want each route cached so that when the user is offline, navigating to it still returns a 200 response and the route remains accessible.

const cacheRoutes = [];

routes.forEach((route) => {
  cacheRoutes.push(new RegExp(route.pattern));
});

Each route is added to the array as a regular expression.

We now have 2 arrays: ‘cacheAssets’ and ‘cacheRoutes’. To precache the assets, we will use the precacheAndRoute method like this:

precacheAndRoute(cacheAssets, {
  directoryIndex: null,
});

I’ve set `directoryIndex` to null because I want the service worker shell to handle offline navigation instead. To do that, we bind a handler to the shell page that was created when we generated the project, ‘/static/service-worker-index.html’.

From there, we apply the routes that we generated (‘cacheRoutes’) to the shell. If there are any routes that you want to keep out of the cache, you can add them to the `denylist` array.

const handler = createHandlerBoundToURL('/service-worker-index.html');

const navigationRoute = new NavigationRoute(handler, {
  allowlist: cacheRoutes,
  //denylist: [
  //  new RegExp('/blog/restricted/'),
  //],
});

registerRoute(navigationRoute);
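The way the two lists interact can be sketched in a few lines. This is a simplified stand-in written for illustration, not Workbox’s own code: it assumes a navigation is served from the shell only when its path matches no `denylist` pattern and at least one `allowlist` pattern. The function name `shouldServeShell` and the sample patterns are hypothetical.

```javascript
// Simplified stand-in for the allowlist/denylist decision (an assumption about
// the behaviour, not the library's implementation).
function shouldServeShell(pathname, allowlist, denylist = []) {
  // A denylist match always wins.
  if (denylist.some((pattern) => pattern.test(pathname))) return false;
  // Otherwise the path must match at least one allowed route.
  return allowlist.some((pattern) => pattern.test(pathname));
}

// Hypothetical patterns, similar in shape to what `route.pattern` provides.
const sampleAllowlist = [/^\/$/, /^\/blog\/?$/, /^\/blog\/([^/]+?)\/?$/];
const sampleDenylist = [new RegExp('/blog/restricted/')];

console.log(shouldServeShell('/blog/hello-world', sampleAllowlist, sampleDenylist)); // true
console.log(shouldServeShell('/blog/restricted/', sampleAllowlist, sampleDenylist)); // false
console.log(shouldServeShell('/api/data.json', sampleAllowlist, sampleDenylist)); // false
```

Anything that is not a known route, such as an API path, falls through to whatever other routes are registered.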

Cache external https requests

Finally, we want to also cache any requests we make outside of the app to OCM.

To do that, we register the external routes by checking the request’s origin, as shown below. I am checking against 3 different domains (dev, uat, and prod) and applying a custom cache name, which allows me to easily check which requests have been cached.

registerRoute(
  // url.origin never includes a trailing slash, so compare without one
  ({ url }) =>
    url.origin === 'https://oce-dev.oracle.com' ||
    url.origin === 'https://oce-uat.oracle.com' ||
    url.origin === 'https://oce.oracle.com',
  new StaleWhileRevalidate({
    cacheName: 'oce',
  })
);
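One detail worth checking when matching on origin is that the `origin` of a `URL` object contains only the scheme, host, and port, with no trailing slash. The sketch below shows this with a match callback that could be passed to `registerRoute`. The names `OCM_ORIGINS` and `isOcmRequest` and the sample path are hypothetical; the domains are the example ones used in this post.

```javascript
// Assumed origins, taken from the example domains in this post.
const OCM_ORIGINS = new Set([
  'https://oce-dev.oracle.com',
  'https://oce-uat.oracle.com',
  'https://oce.oracle.com',
]);

// Match callback taking the same ({ url }) argument used above.
const isOcmRequest = ({ url }) => OCM_ORIGINS.has(url.origin);

// Hypothetical request URL for illustration.
const sampleUrl = new URL('https://oce-dev.oracle.com/content/items?limit=10');

console.log(sampleUrl.origin); // https://oce-dev.oracle.com
console.log(isOcmRequest({ url: sampleUrl })); // true
console.log(isOcmRequest({ url: new URL('https://example.com/') })); // false
```

Keeping the origins in a `Set` also makes it easy to add or remove an environment without editing the match expression.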

Note: At the time of writing, Sapper does not expose the generated CSS files in its files array. There is an open merge request waiting to be reviewed and pulled in to support this. So, if you want your offline app to be styled, you will also need to add the following:

registerRoute(
  /\.(?:css)$/,
  new CacheFirst({
    cacheName: 'css',
    plugins: [
      new ExpirationPlugin({
        maxEntries: 60,
        maxAgeSeconds: 30 * 24 * 60 * 60, // 30 Days
      }),
    ],
  }),
);

This will capture and cache all the CSS files.

If you would like, you could use the same approach for images with this:

registerRoute(
  /\.(?:png|gif|jpg|jpeg|svg)$/,
  new CacheFirst({
    cacheName: 'images',
    plugins: [
      new ExpirationPlugin({
        maxEntries: 60,
        maxAgeSeconds: 30 * 24 * 60 * 60, // 30 Days
      }),
    ],
  }),
);
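It helps to know which URLs a pattern like this will actually catch. The sketch below is a simplified emulation of how I understand regular-expression routes to be matched, and it is an assumption rather than Workbox’s code: the pattern is tested against the full URL, and for cross-origin requests the match has to start at the beginning of the URL. The function name `regExpRouteMatches` and the example origins are hypothetical.

```javascript
// Simplified emulation of a RegExp route match (an assumption, not library code):
// same-origin URLs may match anywhere in the href, cross-origin URLs only at index 0.
function regExpRouteMatches(regExp, href, pageOrigin) {
  const url = new URL(href);
  const result = regExp.exec(url.href);
  if (!result) return false;
  if (url.origin !== pageOrigin && result.index !== 0) return false;
  return true;
}

const IMAGE_PATTERN = /\.(?:png|gif|jpg|jpeg|svg)$/;
const CDN_IMAGE_PATTERN = /^https:\/\/cdn\.example\.com\/.*\.(?:png|jpg)$/;
const PAGE_ORIGIN = 'https://portal.example.com'; // hypothetical app origin

// Same-origin image: matches.
console.log(regExpRouteMatches(IMAGE_PATTERN, 'https://portal.example.com/logo.png', PAGE_ORIGIN));
// Query string after the extension: `$` no longer lines up, so no match.
console.log(regExpRouteMatches(IMAGE_PATTERN, 'https://portal.example.com/logo.png?v=2', PAGE_ORIGIN));
// Cross-origin image: the match does not start at index 0, so no match.
console.log(regExpRouteMatches(IMAGE_PATTERN, 'https://cdn.example.com/hero.jpg', PAGE_ORIGIN));
// Cross-origin image with a pattern anchored at the start of the URL: matches.
console.log(regExpRouteMatches(CDN_IMAGE_PATTERN, 'https://cdn.example.com/hero.jpg', PAGE_ORIGIN));
```

If your images are served from a different domain or carry query strings, you may need a pattern that covers the whole URL or a match callback instead.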

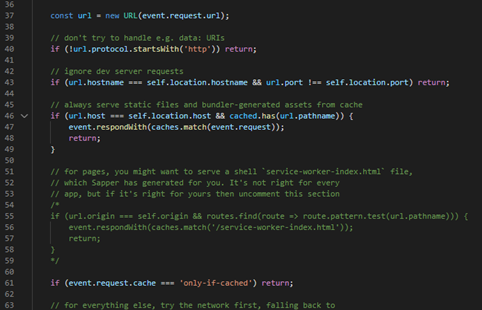

Final output

When finished, your new service worker should look similar to this:

import { timestamp, files, shell, routes } from '@sapper/service-worker';
import { createHandlerBoundToURL, precacheAndRoute } from 'workbox-precaching';
import { NavigationRoute, registerRoute } from 'workbox-routing';
import { CacheFirst, StaleWhileRevalidate } from 'workbox-strategies';
import { ExpirationPlugin } from 'workbox-expiration';

const ASSETS = `cache${timestamp}`;

const cacheAssets = [];

// generated assets: filenames are unique per build, so no revision is needed
shell.forEach((asset) => {
  cacheAssets.push({
    url: `/${asset}`,
    revision: null,
  });
});

// static assets: revision changes with every build
files.forEach((asset, i) => {
  cacheAssets.push({
    url: `/${asset}`,
    revision: `${i}_${ASSETS}`,
  });
});

const cacheRoutes = [];

routes.forEach((route) => {
  cacheRoutes.push(new RegExp(route.pattern));
});

precacheAndRoute(cacheAssets, {
  directoryIndex: null,
});

const handler = createHandlerBoundToURL('/service-worker-index.html');

const navigationRoute = new NavigationRoute(handler, {
  allowlist: cacheRoutes,
  //denylist: [
  //  new RegExp('/blog/restricted/'),
  //],
});

registerRoute(navigationRoute);

//ext images
registerRoute(
  /\.(?:png|gif|jpg|jpeg|svg)$/,
  new CacheFirst({
    cacheName: 'images',
    plugins: [
      new ExpirationPlugin({
        maxEntries: 60,
        maxAgeSeconds: 30 * 24 * 60 * 60, // 30 Days
      }),
    ],
  }),
);

//css
registerRoute(
  /\.(?:css)$/,
  new CacheFirst({
    cacheName: 'css',
    plugins: [
      new ExpirationPlugin({
        maxEntries: 60,
        maxAgeSeconds: 30 * 24 * 60 * 60, // 30 Days
      }),
    ],
  }),
);

//oce queries (url.origin never includes a trailing slash)
registerRoute(
  ({ url }) =>
    url.origin === 'https://oce-dev.oracle.com' ||
    url.origin === 'https://oce-uat.oracle.com' ||
    url.origin === 'https://oce.oracle.com',
  new StaleWhileRevalidate({
    cacheName: 'oce',
  })
);

Summary

With that done, you now have a website or application running on top of Sapper and Workbox that supports offline access. You may still need to implement a solution for syncing content contributions when the user reconnects; however, assets consumed from OCE or the Akamai CDN cache will now be stored for quick offline access from your browser.

Still have questions? Contact us via the submission form below to learn more and see how Fishbowl Solutions can help implement a solution involving Oracle Content Management Enterprise CMS platform.
